Azure AI Hub-and-Spoke Architecture: Building Enterprise-Grade AI at Scale

- peterrivera813

- Apr 1

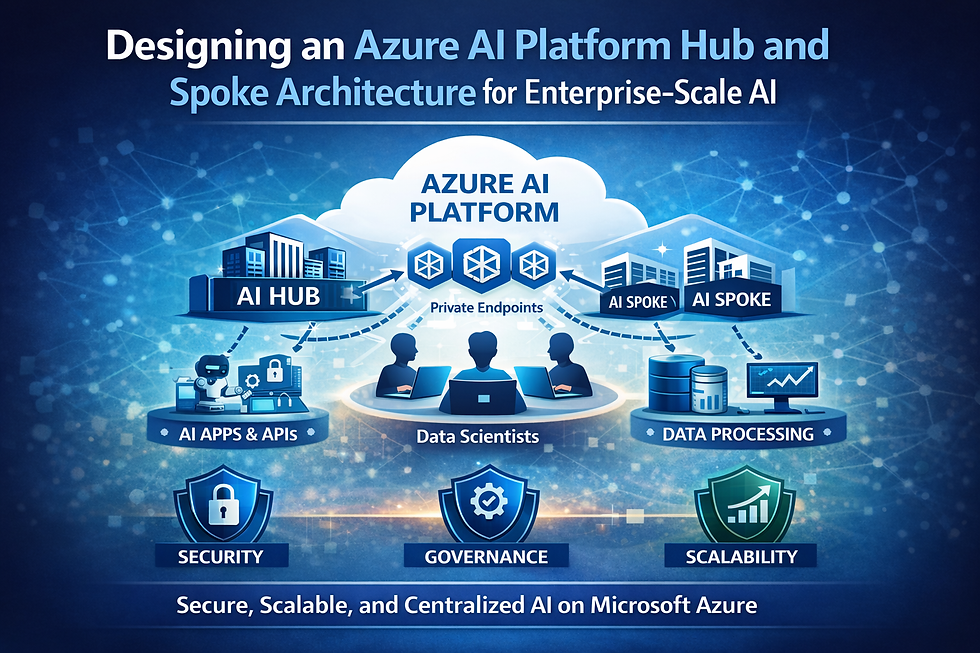

Enterprise AI workloads require a network design that enforces security, enables tenant isolation, and keeps costs transparent at scale. The hub-and-spoke topology is the proven approach in Azure for meeting these demands — centralizing shared AI services in a governed hub while isolating tenant workloads in dedicated spokes. This post covers how to design, deploy, and operate an Azure AI hub-and-spoke architecture aligned to Azure Landing Zone principles, from management group hierarchy through to chargeback and day-two operations.

Understanding Azure AI Hub-and-Spoke Architecture

AI services are expensive, quota-constrained, and regionally limited. A separate Azure OpenAI instance per team is fiscally unsustainable. The same applies to Azure Machine Learning workspaces and Azure AI Services endpoints. Centralizing these in a governed hub — with consumption routed through a policy-enforcing gateway — is the natural solution.

The pattern resolves a core tension: shared infrastructure efficiency (PTU reservations, AI Search indexes, model deployments) vs. tenant isolation requirements (data residency, compliance, access boundaries). Shared services live in the hub; tenant-specific data and compute live in isolated spokes.

Three non-negotiables

- End-to-end tenant isolation across network, identity, compute, and data.
- Governed traffic flow from user to model endpoint, with full auditability.
- Transparent chargeback across shared and tenant-dedicated resources.

Subscription & Management Group Layout

Get the hierarchy right first — it's where governance by inheritance begins and Azure Policy enforcement is anchored.

| Management Group | Subscriptions | Purpose |
| --- | --- | --- |
| Platform | Connectivity · Management · Security | Hub VNet, Firewall, DNS, ExpressRoute gateways, Defender for Cloud |
| AI Hub | AI Hub subscription | APIM, Azure OpenAI, AI Foundry, App Gateway + WAF (all shared AI services) |
| AI Spokes | One per tenant / BU / regulated boundary | Tenant-isolated compute and data; provisioned via subscription vending pipeline |
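
To make "governance by inheritance" concrete, here is a minimal Python sketch that models the hierarchy as a child-to-parent map and collects the policies a scope inherits. The group names mirror the table above. The `Tenant Root` parent, the `spoke-tenant-a` subscription, and every policy name are illustrative assumptions, not real Azure Policy definitions or API calls.

```python
# Illustrative model only: management groups and subscriptions as child -> parent.
PARENT = {
    "Platform": "Tenant Root",
    "AI Hub": "Tenant Root",
    "AI Spokes": "Tenant Root",
    "spoke-tenant-a": "AI Spokes",  # hypothetical spoke subscription
}

# Hypothetical policy assignments per scope.
ASSIGNMENTS = {
    "Tenant Root": ["require-tags"],
    "AI Spokes": ["deny-public-network-access", "require-private-endpoints"],
    "spoke-tenant-a": ["allowed-locations-eu"],
}


def effective_policies(scope: str) -> list[str]:
    """Collect assignments from the root down to `scope`."""
    chain = []
    current = scope
    while current is not None:
        chain.append(current)
        current = PARENT.get(current)  # None once we pass the root
    policies = []
    for node in reversed(chain):       # root first, most specific scope last
        policies.extend(ASSIGNMENTS.get(node, []))
    return policies


print(effective_policies("spoke-tenant-a"))
# ['require-tags', 'deny-public-network-access', 'require-private-endpoints', 'allowed-locations-eu']
```

A new spoke placed under `AI Spokes` picks up the same baseline without any per-subscription assignment, which is the property the hierarchy is meant to guarantee.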

AI Hub vs. AI Spoke: What Lives Where

| AI Hub (Shared Services) | AI Spoke (Tenant-Dedicated) |
| --- | --- |
| → Application Gateway + WAF (ingress) | → Dedicated spoke VNet (private endpoints only) |
| → Azure Firewall (egress, forced tunneling) | → AKS (namespaces, node pools, or cluster) |
| → API Management (internal VNet mode) | → Azure Cosmos DB (chat history, agent state) |
| → Azure OpenAI / AI Foundry (shared models) | → Azure Storage (documents, vectors) |
| → Azure Machine Learning (shared training + MLOps) | → Azure AI Search (isolated indexes) |
| → Azure AI Services (Vision, Speech, Language APIs) | → Azure Key Vault (per-tenant secrets, CMK) |
| → Azure AI Search (shared or per-tenant) | → Workload managed identity (least-privilege) |
| → Private DNS Zones | → Application Insights |
| → Log Analytics + Azure Monitor | → APIM subscription scoped to tenant product |
| → Azure Policy + RBAC baselines | |
| → Microsoft Entra ID | |

AI Foundry terminology: The AI Foundry "hub" resource (shared compute, connections, governance) lives in the AI Hub subscription. Per-tenant Foundry "projects" live in spoke subscriptions and consume shared models via Private Link. Don't confuse the Foundry hub resource with the architectural hub VNet — they're complementary.

Network Architecture

No AI service endpoint is publicly accessible. Azure OpenAI, AI Foundry, AI Search, and all PaaS services use private endpoints only. Public network access is disabled at the resource level via Azure Policy (Deny effect). The only public surface is the Application Gateway (or Front Door for global deployments).
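
As a rough illustration of what a Deny-effect rule checks, the Python sketch below rejects any in-scope resource request whose public network access is not disabled. It is a simplified stand-in for Azure Policy, not its actual rule syntax. The `ResourceRequest` shape, the resource names, and the non-exhaustive set of targeted types are assumptions made for the example.

```python
from dataclasses import dataclass

# Assumed, non-exhaustive set of resource types the rule targets.
AI_PAAS_TYPES = {
    "Microsoft.CognitiveServices/accounts",  # Azure OpenAI / Azure AI Services
    "Microsoft.Search/searchServices",       # Azure AI Search
}


@dataclass
class ResourceRequest:
    name: str
    resource_type: str
    public_network_access: str  # "Enabled" or "Disabled"


def evaluate(request: ResourceRequest) -> str:
    """Return "deny" when an in-scope resource leaves public access on."""
    if request.resource_type not in AI_PAAS_TYPES:
        return "allow"  # out of scope for this rule
    if request.public_network_access != "Disabled":
        return "deny"
    return "allow"


print(evaluate(ResourceRequest("aoai-shared", "Microsoft.CognitiveServices/accounts", "Enabled")))   # deny
print(evaluate(ResourceRequest("aoai-shared", "Microsoft.CognitiveServices/accounts", "Disabled")))  # allow
```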

Spoke-to-spoke traffic never flows directly — it always transits the hub firewall. UDRs on every spoke subnet enforce this.
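
The effect of the default-route UDR can be sketched with longest-prefix matching, which is how the next hop is chosen among candidate routes. The Python example below is a simplification with assumed address ranges and an assumed firewall IP; it ignores Azure's tie-breaking between user-defined and system routes.

```python
import ipaddress

FIREWALL_IP = "10.0.1.4"  # hypothetical hub firewall private IP

# Simplified route table for one spoke subnet: (prefix, next hop).
ROUTES = [
    ("10.10.0.0/16", "VnetLocal"),  # this spoke's own address space (assumed)
    ("0.0.0.0/0", FIREWALL_IP),     # UDR: everything else goes to the firewall
]


def next_hop(destination: str) -> str:
    """Pick the next hop of the most specific route containing `destination`."""
    dest = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), hop)
        for prefix, hop in ROUTES
        if dest in ipaddress.ip_network(prefix)
    ]
    _, hop = max(matches, key=lambda match: match[0].prefixlen)
    return hop


print(next_hop("10.10.2.5"))  # VnetLocal (stays inside the spoke)
print(next_hop("10.20.3.7"))  # 10.0.1.4 (another spoke, forced via the firewall)
print(next_hop("8.8.8.8"))    # 10.0.1.4 (internet egress, forced via the firewall)
```

Because no route to another spoke's address space exists in the table, traffic to it falls through to the default route and lands on the firewall.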

APIM as the AI Gateway

APIM in internal VNet mode is the policy enforcement point for all AI consumption. It does far more than proxy:

| Capability | What it does | Scope |
| --- | --- | --- |
| Token limit policy | Enforces per-tenant TPM/RPM quotas | Hub |
| Backend load balancer | PTU-first, PAYG spillover; round-robin, weighted, or priority | Hub |
| Circuit breaker | Trips on 429s; uses Retry-After header for recovery timing | Hub |
| Managed identity auth | APIM → OpenAI via system-assigned identity. No API keys. | Hub |
| Token metrics policy | Logs prompt + completion tokens to App Insights per tenantId | Shared |
| Tenant context propagation | Validates Entra ID JWT; injects tenantId header downstream | Spoke |
| Content safety integration | Screens prompts/completions via Azure Content Safety API | Hub |
| Product + subscription model | Each tenant's APIM subscription is scoped to their allocated products only | Tenant |
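
The "PTU-first, PAYG spillover" and circuit-breaker rows interact, so a small model helps. The Python sketch below is not APIM policy syntax. It only mimics the behavior described in the table: prefer the lowest-priority-number backend, skip any backend whose circuit is open, and reopen it once the Retry-After interval has passed. Backend names and timings are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Backend:
    name: str
    priority: int            # lower number = tried first
    open_until: float = 0.0  # circuit is open (backend skipped) until this time

    def available(self, now: float) -> bool:
        return now >= self.open_until

    def trip(self, now: float, retry_after_s: float) -> None:
        """Open the circuit after a 429, honoring the Retry-After value."""
        self.open_until = now + retry_after_s


def pick_backend(backends: list[Backend], now: float) -> Optional[Backend]:
    """Return the highest-priority backend whose circuit is closed, if any."""
    candidates = [b for b in backends if b.available(now)]
    return min(candidates, key=lambda b: b.priority, default=None)


ptu = Backend("openai-ptu", priority=1)      # hypothetical PTU deployment
paygo = Backend("openai-paygo", priority=2)  # hypothetical pay-as-you-go deployment
pool = [ptu, paygo]

print(pick_backend(pool, now=0.0).name)   # openai-ptu
ptu.trip(now=0.0, retry_after_s=30.0)     # PTU answered 429 with Retry-After: 30
print(pick_backend(pool, now=10.0).name)  # openai-paygo (spillover)
print(pick_backend(pool, now=30.0).name)  # openai-ptu (circuit closed again)
```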

End-to-End Traffic Flow

1. User → App Gateway + WAF: HTTPS hits the public IP. WAF v2 evaluates OWASP CRS 3.2 rules, custom rules, and bot protection. TLS terminates here.
2. App Gateway → Azure Firewall: UDR-enforced routing. Firewall Premium applies IDPS signatures and logs all AI-bound traffic.
3. Firewall → APIM: APIM (internal VNet mode) validates the Entra ID JWT, applies token quotas, selects the backend model, and routes the request.
4. APIM → Azure OpenAI / Foundry: The call goes over Private Link using managed identity. DNS resolves to the private endpoint IP. Token metrics are emitted to App Insights.
5. AKS agents (spoke) → APIM: Spoke AKS workloads call APIM via the hub private endpoint. Traffic transits the hub firewall. Agents authenticate with a spoke-scoped managed identity.
6. Response returns along the same route: Every hop is logged in Log Analytics, and App Gateway re-encrypts the response, giving a full end-to-end audit trail.
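
The gateway hop in this flow (identify the tenant, apply the token quota, pass tenant context downstream) can be sketched in a few lines of Python. This is a simplification under stated assumptions: the JWT signature and issuer are taken as already validated, the `tid` claim is used as the tenant key, the quota is a fixed one-minute window, and the `x-tenant-id` header name and the limits are hypothetical.

```python
from collections import defaultdict

TPM_LIMITS = {"tenant-a": 1000, "tenant-b": 500}  # hypothetical tokens-per-minute quotas
_used = defaultdict(int)                          # (tenant, minute) -> tokens consumed


def gateway_step(claims: dict, tokens: int, now_s: float) -> dict:
    """Apply tenant lookup and a fixed-window token quota to one request."""
    tenant_id = claims.get("tid")  # assumes the token itself was already validated
    if tenant_id not in TPM_LIMITS:
        return {"status": 403}     # caller is not an onboarded tenant
    window = (tenant_id, int(now_s // 60))
    if _used[window] + tokens > TPM_LIMITS[tenant_id]:
        return {"status": 429}     # quota exhausted for this minute
    _used[window] += tokens
    # Tenant context travels downstream as a header (name is an assumption).
    return {"status": 200, "headers": {"x-tenant-id": tenant_id}}


print(gateway_step({"tid": "tenant-b"}, 400, now_s=0))   # status 200
print(gateway_step({"tid": "tenant-b"}, 400, now_s=10))  # status 429 (800 > 500)
print(gateway_step({"tid": "tenant-b"}, 400, now_s=61))  # status 200 (new window)
```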

Security Controls Checklist

| Control | Enforcement | Verification |
| --- | --- | --- |
| Public network access disabled on all AI PaaS | Azure Policy (Deny) | Defender for Cloud compliance |
| Private endpoints on all PaaS services | Azure Policy (Deny without PE) + DINE | Network Watcher topology |
| APIM → OpenAI via managed identity only | APIM backend policy + RBAC (no key auth) | APIM backend config audit |
| WAF in Prevention mode (OWASP 3.2) | App Gateway WAF policy | WAF policy review |
| Firewall Premium IDPS in alert-and-deny | Firewall policy IDPS setting | Firewall policy review |
| All spoke traffic forced through firewall (UDR) | UDR on spoke subnets: 0.0.0.0/0 → Firewall private IP | Effective routes on spoke nodes |
| Diagnostic logs enabled (90-day minimum) | DINE policy for diagnostic settings | Log Analytics table check |
| Least-privilege RBAC + managed identities | Custom roles; no broad built-in roles on data plane | Entra ID access reviews (quarterly) |
| CMK for sensitive tenant data at rest | Azure Key Vault (per spoke); CMK on Cosmos DB + Storage | Defender for Cloud CMK recommendations |

Cost Management & Chargeback

Spoke subscriptions host tenant-dedicated resources, so those costs are directly attributable. Hub resources are shared and require usage-based allocation. Build FinOps in from day one.

| Cost category | Attribution method | Tooling |
| --- | --- | --- |
| Spoke PaaS (Cosmos DB, Storage, AI Search) | Direct: subscription billing + mandatory tags | Azure Cost Management |
| AKS compute per tenant | AKS Cost Analysis (namespace granularity) | AKS Cost Analysis + Kubecost |
| OpenAI token usage | APIM token metrics → App Insights customMetrics, tagged by tenantId + model | KQL on Log Analytics; Power BI |
| Shared hub infra (APIM, Firewall, APGW) | Proportional: tenant API calls / total calls × shared cost | APIM analytics → Cost Management export |
| PTU reservations | Amortized by committed throughput allocation per APIM product | Custom Power BI model |
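
The proportional rule for shared hub infrastructure (tenant API calls / total calls × shared cost) is simple enough to show directly. The Python sketch below uses made-up call counts and a made-up monthly cost purely for illustration.

```python
def allocate_shared_cost(calls_by_tenant: dict[str, int], shared_cost: float) -> dict[str, float]:
    """Split `shared_cost` across tenants in proportion to their API calls."""
    total_calls = sum(calls_by_tenant.values())
    if total_calls == 0:
        return {tenant: 0.0 for tenant in calls_by_tenant}  # nothing to apportion
    return {
        tenant: round(shared_cost * calls / total_calls, 2)
        for tenant, calls in calls_by_tenant.items()
    }


# Hypothetical monthly figures.
calls = {"tenant-a": 600_000, "tenant-b": 300_000, "tenant-c": 100_000}
print(allocate_shared_cost(calls, 12_000.0))
# {'tenant-a': 7200.0, 'tenant-b': 3600.0, 'tenant-c': 1200.0}
```

Rounding per tenant can leave the shares a cent or two off the total for less tidy inputs, so a real chargeback model would need a rule for assigning the remainder.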

Tips to Avoid Common Pitfalls

APIM in external mode: External mode exposes the AI gateway on a public IP. Always deploy APIM in internal (private) VNet mode, fronted by Application Gateway and/or Azure Front Door.

Private DNS zones created per spoke: DNS zones for Azure OpenAI, AI Search, and other PaaS must be centrally managed in the platform hub and linked to spoke VNets. Per-spoke DNS zones break resolution for hub-hosted endpoints.

Resource group isolation instead of subscriptions: Placing multiple tenants in a single subscription (separated by resource groups) prevents per-tenant Azure Policy assignments and complicates cost attribution. Use subscription-level isolation.

Insufficient monitoring: Without diagnostic settings on every hop, throttling, latency, and quota exhaustion can go unnoticed. Enforce diagnostic settings through DINE policy and alert on signals the platform already emits, such as 429 rates and per-tenant token metrics.

Summary

Hub-and-Spoke gives enterprise AI platforms the structure they need: shared models governed through a central control plane, isolated tenant execution environments, and full end-to-end auditability. It is not the simplest architecture to stand up — but it is the right foundation for AI workloads at enterprise scale.

Governance by design: The management group hierarchy means security controls are inherited, not manually applied. Private endpoints, WAF enforcement, diagnostic logging, and mandatory tagging are in place before any workload is deployed. Teams inherit a secure baseline rather than building one themselves.

Shared infrastructure, tenant-level accountability: Azure OpenAI, Azure Machine Learning, Azure AI Services, and Azure AI Search are centralized in the hub, where they are purchased once, governed centrally, and consumed by many. APIM's token metrics policy ties every API call back to a tenant and cost center. Chargeback is accurate from day one, not retrofitted later.

Isolation without duplication: Each spoke is a clean boundary with its own subscription, VNet, AKS tenancy, Key Vault, and data stores. A security incident in one spoke cannot propagate to another. Compliance requirements that differ between tenants are handled at the spoke level without affecting the shared platform.

Scale on demand: A new tenant is a pipeline trigger. A new model is an APIM backend registration. A new region is a spoke subscription peered to a Virtual WAN hub. Product teams operate inside well-defined guardrails without needing to understand the full stack beneath them.

Where to start: For greenfield, begin with the ALZ-Bicep/Terraform accelerator to establish the management group hierarchy, then layer the AI Hub subscription on top. For brownfield, the AI Hub subscription can connect to an existing hub VNet; a full ALZ implementation is not required.

Every spoke onboarded, every model registered, every policy tightened makes the platform more capable and trusted. That is the return on the upfront investment.
